A bit tardy with my first take on Genos2. I’ve spent waaay too much time on forums and need to get back to work. 🙂

Genos2 information and videos abound on the Web, so I’ll be skipping a lot of details here. I recommend getting your information from reputable sources, not the self-appointed experts on Internet forums. Given the misinformation that I’ve seen, I don’t think some of these people have ever touched an arranger keyboard, let alone Genos1 or Genos2.

It will be some time until I can actually get hands-on with Genos2. That’s a disadvantage of living in North America where guitar is king. When I do play Genos2, I will post comments. So, please take my initial opinions with a grain of salt.

Genos2 leaves me feeling a bit like Dr. Jekyll and a little bit like Mr. Hyde, depending upon whether Genos2 is your first top-of-the-line (TOTL) arranger or an upgrade from Genos1.

Let’s hear from the kindly doctor first.

Your first TOTL

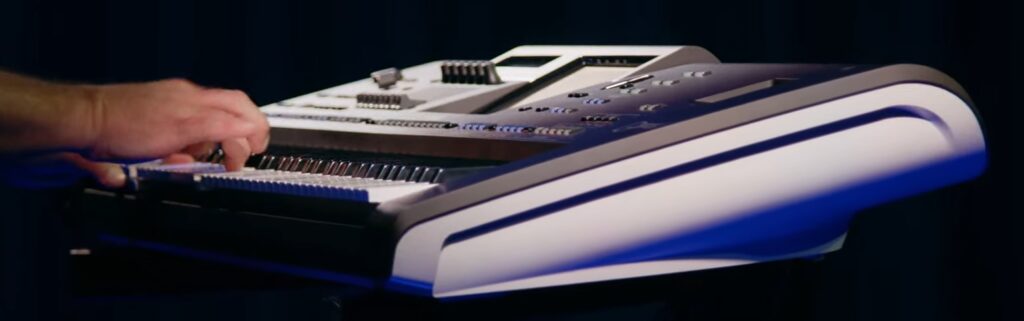

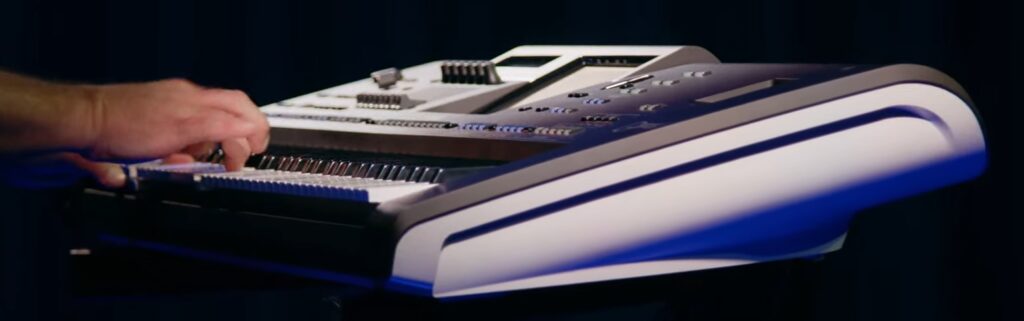

If Genos2 is your first TOTL arranger, you’re on good ground. Genos2 builds on the solid Genos1 foundation. Genos1 has been a reliable, great sounding instrument and I’m sure you won’t be disappointed in G2.

Genos2 adds many new voices and styles to Genos1. (Some of the Genos1 voices and styles are available with the Genos2 Complete Pack, free after registration.) I made a list of the new Genos2 voices.

Genos2 significantly improves on the G1 CFX piano. It has more strike (velocity) levels: 7, up from 5. The sustain is longer (doubled). Check out this video which focuses on Genos2 pianos. The G2 piano sounds are lovely.

Like the Montage upgrade, G2 received “character pianos”:

- Character piano: A rough and wooly sound (think “ragtime”)

- Cinematic piano: An air of mystery about it (think “Halloween”)

- Felt piano: A sound softened by felt woven in the strings (think “Titanic”)

Unlike Montage M, all of these pianos are enriched by the stunning, new REVelation reverb from Steinberg. Genos2 also adds a new multi-band compressor.

Genos2 adds Ambient Drums to the original Genos1 Revo drums. (Ignore the Internet misinformation about Revo being dropped.) Ambient Drums mix close-mic’ed samples with room ambience samples, consistent with the sampling techniques employed in modern percussion VST libraries. You (or the style) dial in the amount of ambience, thereby adjusting the sense of space in the sound.
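For the curious, here is a rough Python sketch of the general close-plus-room blending idea. It is only an illustration: the function name `mix_ambience`, the 0.0 to 1.0 `ambience` range, and the simple additive blend are my assumptions, not Yamaha's actual implementation, which isn't documented publicly as far as I know.

```python
def mix_ambience(close, room, ambience):
    """Blend a close-mic'ed drum sample with a room-ambience sample.

    close, room: lists of float sample values (hypothetical mono buffers).
    ambience:    0.0 = dry (close mic only), 1.0 = room added at full level.
    NOTE: an illustrative additive blend, not Yamaha's algorithm.
    """
    if not 0.0 <= ambience <= 1.0:
        raise ValueError("ambience must be between 0.0 and 1.0")

    # The room tail usually rings longer than the close sample,
    # so pad the shorter buffer with silence.
    length = max(len(close), len(room))
    mixed = []
    for i in range(length):
        c = close[i] if i < len(close) else 0.0
        r = room[i] if i < len(room) else 0.0
        mixed.append(c + ambience * r)
    return mixed


# Made-up sample values, just to show the call.
dry = mix_ambience([1.0, 0.5], [0.2, 0.2, 0.2], 0.0)
roomy = mix_ambience([1.0, 0.5], [0.2, 0.2, 0.2], 0.5)
```

With `ambience` at zero you hear only the close mic. Turning it up brings in the room signal, including the tail that outlasts the dry hit.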

One shouldn’t forget the new true FM voices. Yamaha enabled the FM-X hardware in the Genos2 tone generators. [BTW, the FM hardware is locked away in Genos1.] Now you get real dynamic FM sound. Genos2 does not support FM voice editing, but, really, how many people are going to create FM voices from scratch? Not to mention how notoriously hard it is to get one’s mind around FM programming. A free DX7 expansion pack awaits those who register. With a little deep diving, I can safely say there is real FM-X in there.

No doubt, Yamaha have produced new styles and revamped old styles to use the new effects and voices. There are now 800 styles, which, in itself, is a staggeringly big MIDI phrase library.

Ambient Drums illustrate the Genos ethos — producing a refined, “like the recording” sound. I’m sure this gives hobby players a lot of pride and pleasure. I like it because I can produce great sounding demos without a lot of effort!

Genos2 includes other enhancements worth mentioning. The style Dynamics Control improves on G1 dynamic control. The new Dynamics Control provides knob control over the volume and velocity of style parts, letting the backing band more realistically sit out or dig in. The front panel adds two more assignable buttons (3 total above the articulation buttons) and two buttons to control the ever-useful Chord Looper.
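To make the volume-and-velocity idea concrete, here is a hedged Python sketch of how a single knob might scale both for one style part. Everything in it is assumed for illustration: the `apply_dynamics` name, the -1.0 to +1.0 knob range, the `depth` parameter, and the linear scaling. Yamaha's real Dynamics Control curve is surely more refined.

```python
def apply_dynamics(velocity, volume, knob, depth=0.5):
    """Scale a style part's MIDI note velocity and volume from one knob.

    velocity: MIDI note-on velocity, 1..127.
    volume:   MIDI-style part volume, 0..127.
    knob:     -1.0 (band sits out) .. +1.0 (band digs in); 0.0 = unchanged.
    depth:    hypothetical sensitivity; 0.5 means +/-50% at the extremes.
    NOTE: an illustrative linear model, not Yamaha's implementation.
    """
    if not -1.0 <= knob <= 1.0:
        raise ValueError("knob must be between -1.0 and 1.0")

    scale = 1.0 + depth * knob
    # Clamp to valid MIDI ranges; velocity stays >= 1 so notes still sound.
    new_velocity = max(1, min(127, round(velocity * scale)))
    new_volume = max(0, min(127, round(volume * scale)))
    return new_velocity, new_volume


laid_back = apply_dynamics(80, 100, -1.0)  # quieter, softer-struck part
digging_in = apply_dynamics(100, 100, 1.0)  # louder part, clamped at 127
```

Scaling velocity as well as volume matters because velocity usually selects different sample layers, so the part changes in timbre as well as in loudness.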

If you don’t own a Genos and want one, buy it. Given Yamaha’s long development cycles, it may be five or six years before the next major Genos release.

Upgrade to Genos2?

The decision to upgrade from the previous model is always a difficult one, whether it’s Montage M, MODX+, Genos2, Korg, Roland, whatever. There might be a few of us who are made of money, but most of us punters need to lay off old gear in order to afford the new. If it’s a trade-in or a re-sell, we’re going to lose value and we’re going to pony up cash for the shiny new object. In the case of a premium product like Genos2 or Montage M, the delta might be $1,800 or more. And then there’s the hassle of dealing with the villains on Craigslist or Ray’s Music Exchange.

This is when and where Mr. Hyde makes an entrance.

The decision to upgrade is a personal decision and choice. Objectively, does the delta enable us to meet our personal musical goals, that is, fulfill a genuine need? Otherwise, I cannot objectively account for enthusiasm, fan-dom, FOMO, or just plain desire (G.A.S.).

Which leads me to…

Generation skipping

When it comes to electronics, I’m a “generation skipper.” I rarely buy the next generation of anything. I don’t find the value proposition (increased utility per upgrade dollar) to be enough to justify a purchase.

So it is with Genos2. My Genos1 is still a rockin’ keyboard. It isn’t used up in the economic sense.

By the way, now is a terrific time to buy a new old stock (NOS) or re-sale Genos1. North American retailers have not sold through and are selling NOS Genos1 at a reduced price. [I took my own advice and have made a deal for an NOS Clavinova CSP-170.] European customers are switching to Genos2 in droves and they need to unload their Genos1 keyboards in order to fund a new G2. Buy a reduced price Genos1 now and upgrade to a Genos3 later. Many different ways to make a play.

Need over want

What would it have taken to make me decide otherwise and buy Genos2? Or, letting Mr. Hyde loose, what is Genos2 missing?

Right now, my most pressing need is an 88-key piano action keyboard for practice. I need to raise my piano skills and I need to transition to an acoustic grand when necessary. The FSX action is not up to snuff — I’ve tried with Genos1.

Compared to Clavinova (for example), Genos2 is missing:

Even Montage M8X came up short for me.

What really disappointed me is the other biggie: no Virtual Circuit Modeling (VCM) rotary organ simulator. This is a big omission as far as an upgrade is concerned. With two really fine synth-action instruments (Genos1 and MODX) in hand, I just can’t justify an upgrade to G2 based on what G2 is and isn’t today.

Yamaha product silos

Looking at Montage M and Genos2, Yamaha’s product silos get in the way of making all-rounder keyboards. Yamaha product groups protect their turf and abhor cannibalized sales. This attitude and market strategy drive a lot of customers crazy, including me.

Reading the forums, there is demand for an 88-key Genos. The P-S500 is not enough to scratch the arranger itch, DGX-670 is feature-light and CVP prices are way out of sight.

Yamaha need to pick up the pace and roll out new features faster. Will Genos2 people need to wait five years to get the VCM rotary sim, Bösendorfer piano, or VRM? At age 72, I’ve got about 11 years left (male life expectancy, U.S.A.). Let’s get going, Yamaha! 🙂 My time is running out…

Copyright © 2023 Paul J. Drongowski