The Yamaha Montage synthesizer is now hitting stores in North America. One of the local retailers (GC in Natick) has a Montage set up for demo. Let’s go!

The demo unit is a Montage8 with the 88-key balanced hammer effect keyboard. I have always liked Yamaha’s upper-end “piano” actions and the Montage8 is no exception. I primarily play lighter “synth” action keyboards like the MOX and the PSR-S950. Fortunately, I spent the previous week working out on the Nord Electro 2 waterfall keyboard, which requires a slightly heavier touch. I played the Montage8 for a little more than an hour without my hands wilting, which is a good sign.

First off, the demo unit was plugged into two Yamaha HS7 monitors and a Yamaha HS8S subwoofer. GC usually patches keyboards through grotty keyboard amplifiers, so I suspect that Yamaha provided the monitors in order to create the best impression of the Montage. I was dismayed when I started off with a few B-3 organ patches and could not contain the low end. The front panel EQ simply didn’t do the job. Time to check the monitor settings. The HS7s were flat, but the HS8S subwoofer level was cranked. After backing off the sub, all was right with the world.

Yes, some people like to simulate small earthquakes with subsonic frequencies. This, however, is not conducive to acoustic music. It’s not conducive to peaceful co-existence with your bass player either. If you encounter a Montage in the wild, check the EQ and the subwoofer level before proceeding!

So, as you may have gathered already, this is not a review of Montage for EDM. I took along my church audition folder (covering gospel to contemporary Christian to traditional and semi-classical music) and a small binder of rock, jazz, soul and everything in between. I’d like to think that this is the first time anyone has played “Jesu, Joy of Man’s Desiring” on the Montage, however poorly.

The electric pianos are terrific. I had a fine old time playing soul jazz and whatnot. Great connection between keys and sound. Comparing against Nord Stage, I would say that the Montage is top notch in this department and definitely a cut above the old Nord Electro 2. Yamaha did not put the Reface CP (Spectral Component Modeling) technology into Montage; they didn’t need to.

Tonewheel organ is still Yamaha’s Achilles’ heel. There is some modest improvement, but the Montage is not in clone territory. In this area, I would say, “Advantage Nord.” If I can cover B-3 with the MOX on Sunday, I’m sure that the Montage is up for medium duty. However, the tonewheel organs lack the visceral thrill of the EPs. I will say that the 88-key action did not inhibit my playing style too much. (If I were going to buy a Montage, tho’, it would be a 6.)

The pipe organs got some tweaks, mainly by enhancing the Motif pipe organ sounds via FM. There are a few lovely patches, but I will still look to the Tyros (and the PSR expansion pack) for true realism. The Nord Electro 5d has modeled principal organ pipes where the drawbars change the registration. Ummm, here, I would give the edge to Nord. Plus, the pipe organs in the Nord sample library are more on par with the Tyros and PSR expansion pack. Hate to say it: Montage pipe organs are good “synthesizer pipe organs,” and that ain’t entirely a compliment.

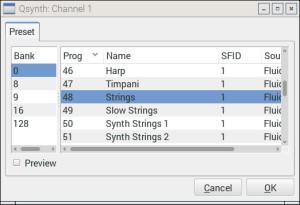

The new strings are wonderfully realistic, especially for solo/melody lines. I really enjoyed bringing sections in and out dynamically. (The expression pedal was sync’ed to the SuperKnob.) With the changes in our music ministry group, I’ve been playing more melodic and exposed parts. I could really dig playing a reflective improvisation for meditation using the strings and woodwinds under Motion Control.

The classical woodwinds got a boost in Montage, too. The woodwinds are all excellent although the sonic delta above Motif XF (MOXF and MOX, too) was not as “Wow” as the strings. Most likely, my ears were getting tired at that point…

Since I was losing objectivity, I just briefly touched on brass. I need good French horns and Montage did not disappoint. I wish that I had spent time with the solo trumpets and trombones, but my ears were telling me to knock it off.

The new Telecaster (TC) is quite a treat. The “Real Distortion” effects (Motif XF update 1.50) are now standard and the programmers made good use of them. I wish that the Montage had the voice INFO screen from the PSR/Tyros series. The INFO screen displays playing tips and articulations for each voice. This makes it a lot easier to find and exploit the sonic “Easter eggs” in the patches. (“Play AF1 to get a slide. Play AF2 to get a hammer on.”)

Fortunately, it was a rainy Saturday afternoon and the store was empty — disturbed only by the occasional uncontrolled rugrat pounding on some poor defenseless keyboard. Overall, I felt like I really heard the Montage and could make a fair evaluation.

I did not dive into editing, arpeggios, motion sequencing, recording, etc., so this is surely not a comprehensive review. Anyone spending less than one month with this ax cannot claim “comprehensive.” It just ain’t possible, so I would call my initial opinion “first impressions.” That said, I can see why the Live Sets are important. I mainly dove in through Category Search, where some of the touch buttons are a wee bit too small. Punching up a sound in full combat requires BIG buttons.

Montage looks, feels and sounds like a luxury good. Montage is also priced like a luxury good. The Montage8 MAP is $4000 USD. It is quite a beast physically and I would most likely go for the Montage6 at a “mere” 33 pounds and $3000 USD. None of the Montage line would be an easy schlep, especially when I have to buzz in and out of my church gig fast.

Would I buy one? Tough call. On the same field trip, I got to sit in a Tesla Model S ($71,000 USD) — a luxury car built around a computer monitor or two. I just recently bought a Scion iM (AKA Toyota Auris, Levin, Blade, whatever) for about $20,000 USD. Both cars could get me to the gym and back. I like my iM. What does that say about me as a customer? Do you think I would buy a Montage? Enigmatic.

See the list of new waveforms in the Montage. Also, check out the latest blog posts! Update: May 10, 2016.