Right from the get-go, I thank Guitar Center Lynnwood for letting me plink away at an Arturia AstroLab. I dropped in to play before sufficient caffeine had kicked in and I wasn’t really feeling the music. Nonetheless, I was glad to actually play an AstroLab and not watch its demo videos. 🙂

AstroLab and I are not quite ready for each other yet. I really need the Augmented Brass and Augmented Woodwinds. The Augmented series are pretty sweet instruments and acoustic woodwinds, especially, are essential to what I play live. Still, thanks to playing AstroLab now, I will keep AstroLab on my radar screen.

AstroLab or Analog Lab?

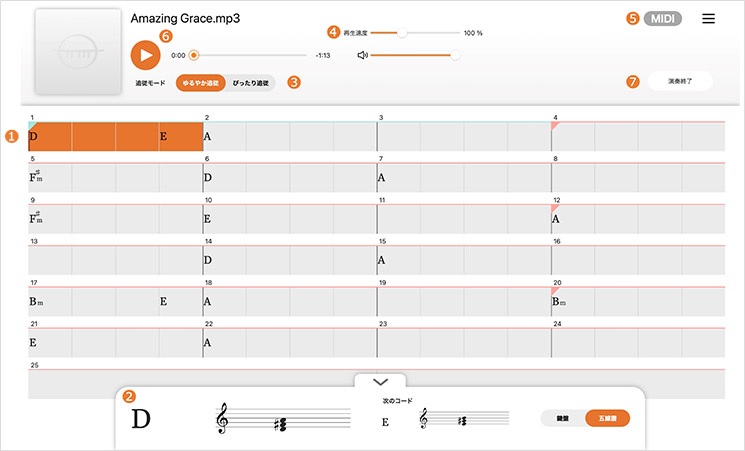

In case you haven’t watched a few videos, AstroLab is Analog Lab in a stand-alone keyboard. That’s the short of it. Sitting down to AstroLab is exactly like sitting down with Analog Lab. Most of Analog Lab’s instrument collection is there along with a number of recognizable presets. I couldn’t perform a systematic check, but I believe Arturia have cooked up additional presets for AstroLab itself.

The big elephant in the room: If you have Analog Lab (or V Collection) on a laptop, why AstroLab? AstroLab is not cheap at $1,599 USD (MAP/street). You can get a pretty decent laptop and Analog Lab for $1,600. For that matter, you can buy Analog Lab V bundled with a KeyLab Essential MK3 for only $270 (MAP). Add on a laptop and you’re ahead of the game. [Analog Lab V is integrated brilliantly with the KeyLab controller series, BTW.] So, AstroLab needs to have significant value-added.

From my own perspective, I’m not wild about using a laptop in a live church setting. I once had a laptop balk in church and when I’ve got to play, I’ve got to play. I like the idea of Analog Lab running on a reliable, dedicated keyboard. On the down-side, AstroLab gives up KeyLab’s sliders, having no sliders at all. (To me, pads are MEH.) On the up-side, working at AstroLab is Analog Lab V déjà vu. And that’s a good thing…

Build

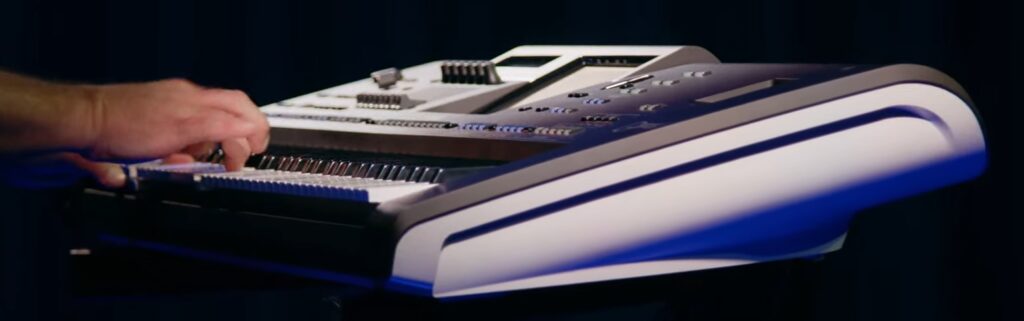

AstroLab is an attractive-looking instrument. Visually, it would grace any stage. Dimensionally, it is not much wider than the 61-key keybed and, as a result, is quite compact. That’s why AstroLab’s heft is so surprising. At 22 pounds (10 kg), this instrument has some weight and you won’t be throwing it around with palm swipes. The KeyLab Essential 49 and 61 are only 6.1 and 6.8 pounds, respectively. I love my KeyLab Essential 49 for its light weight and portability. Thus, the relatively weighty AstroLab is a double surprise.

The build, encoder knobs, buttons and keys feel solid and durable. The integrated display knob, not so much. The display knob — what Arturia calls the “Color Screen Encoder” — feels like it rocks around and I feared a bit for its safety in my under-caffeinated hand. I would rather confirm selection with one of those solid-feeling buttons near the display knob.

One near-term suggestion: Ditch the cheesy-assed music stand shown on the Arturia AstroLab “Details” web page. It’s not up to the usually superb Arturia design aesthetics.

Display and UI

I was quite amazed by the amount of detail shown by the color display. You can easily recognize all of the virtual synths and keyboards by their familiar appearance. I didn’t have any trouble reading the instrument, patch, and parameter names. Text is legible.

As to the user interface (UI), you can dial around the menu system (literally). Patches are organized by virtual instrument, sound category (strings, etc.) and artist. The artist category lets you dial in a patch based upon a popular song or what have you, a feature that will be welcomed by cover-band players.

Arturia need to divide a few categories into separate, smaller categories. For example, brass and woodwind instruments are in the same, combined category. In order to find a clarinet, I must scroll through a bunch of brass instruments. It’s bad enough to scroll through a list of instruments, but, hey, let’s focus the list and reduce scroll time. How ’bout separate categories for woodwinds and brass?

Splits and layers

My only other source of frustration was trying to create a split. I didn’t have time to browse the AstroLab manual before heading down to the music shop. Intuition alone didn’t cut it and I never succeeded in creating a split. Bummer.

Sounds

I took along this week’s music and a few of the jazz tunes that I’ve been working on. Genre-wise, it’s pop, not electronica.

I was pleasantly surprised to find a large number of patches that could fill in for the Augmented Brass and Woodwind instruments. That’s a relief. Generally, patches sounded bright and I raised this point with the store manager. The staff had compared AstroLab vs. Nord and found more mid- to low-range with the Nord through the same monitors. Tweaks needed?

Some of the acoustic pianos sounded too bright and brittle. Fortunately, they could be tamed with the “Brightness” and “Timbre” knobs. I felt much better once I got into the Augmented Pianos. Nice work, there. I couldn’t assess piano sustain, not having a sustain pedal attached to the demo unit.

As to the keybed, it is comfortable to play. It’s another one of those “Compromise” keybeds which aim for a sweet-spot between acoustic piano, electric piano, organ and synth. I much prefer the AstroLab keybed to KeyLab Essential — by far. The keybed made playing electric piano enjoyable (something I dread on Yamaha MODX6). B-3 organ didn’t feel bad under the fingers, either.

I have only one small, probably correctable, quibble about the key touch. Sometimes a patch wouldn’t trigger (produce any sound) when using my lightest touch. This seemed to occur with synth-y patches and maybe setting the key touch sensitivity would make the instrument more responsive.

I did notice a delay when switching between “sampled” instrument patches. Arturia acknowledge this delay, which is due to sample loading. Plan for it when playing live. Switching between modeled instruments is darned quick. I didn’t notice any drop-outs due to polyphony limits, but then I wasn’t pushing AstroLab very hard with splits or layers.

Conclusion

Earlier, I addressed the big elephant in the room — what you get for what you pay. Arturia want to compete with the Yamahas, Korgs, Rolands and Nords. In terms of build and keybed, they are on par. As to sonic value-added, the AstroLab is a work in progress (WIP).

Arturia are smart enough to know it’s a WIP. They need to fill out the brass and woodwind offerings, for example.

The big value-add is Arturia’s sound design. AstroLab is Analog Lab V (temporarily minus some goodies). If you want Analog Lab V in a dedicated keyboard, you got it now.

Copyright © 2024 Paul J. Drongowski