Contemporary workstation instruments offer several options for combo organ emulation. Every workstation has at least a few internal combo organ waveforms. Korg Kronos, for example, has two Vox organ waves built in. Even the lowly Korg microKorg XL+ has two Vox waveforms (DWGS single cycle).

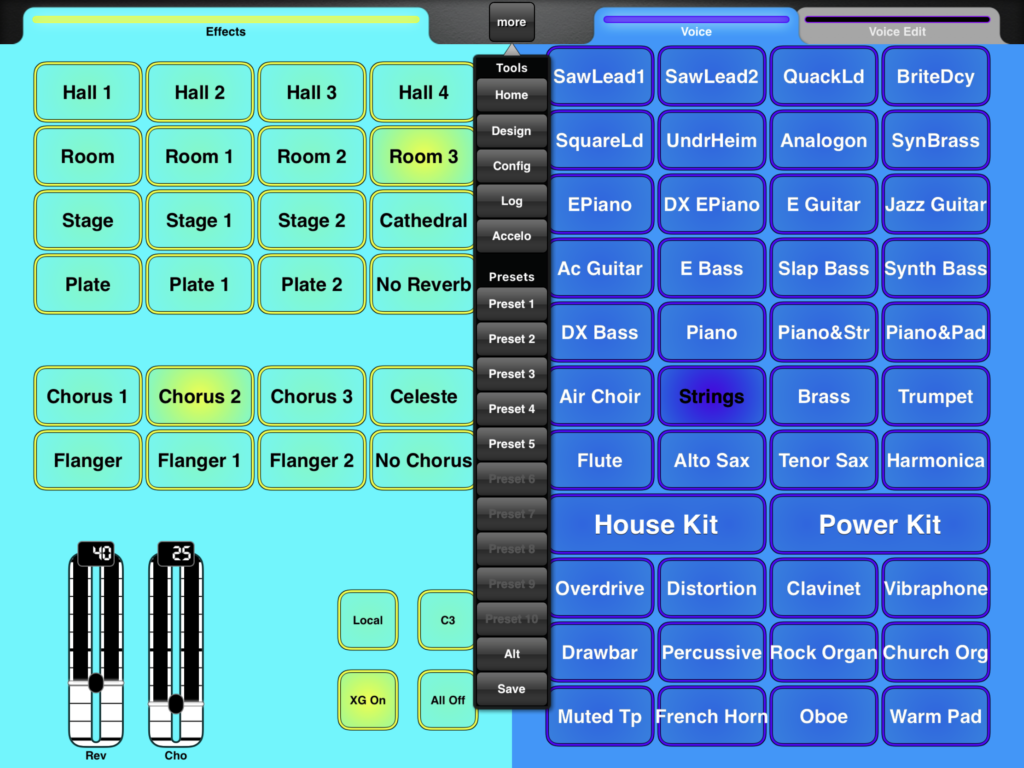

Yamaha MODX and Montage — my focus in this article — have a good variety of Vox and Farfisa waveforms. Yamaha Genos has a lesser endowment as we’ll see. Yamaha Reface YC shares sonic DNA (AWM2 and effects) with the Motif XF and will be the subject of a future post.

Motif, Motif ES and Motif XS

Models in the early Motif series primarily base combo organ patches on two waveforms:

- Portable Electronic: Vox-y tone

- Compact Electronic: Farf-y tone

You can hear these waveforms at work in the Tiny Combo Bars 1 performance and the Tiny Combo Bar 2 performance. The patches layer three are more elements playing Portable Electronic or Compact Electronic (respectively). Each element is filtered differently: low pass, band pass and high pass. The net effect is like several distinctive tab stops or drawbars pulled at once.

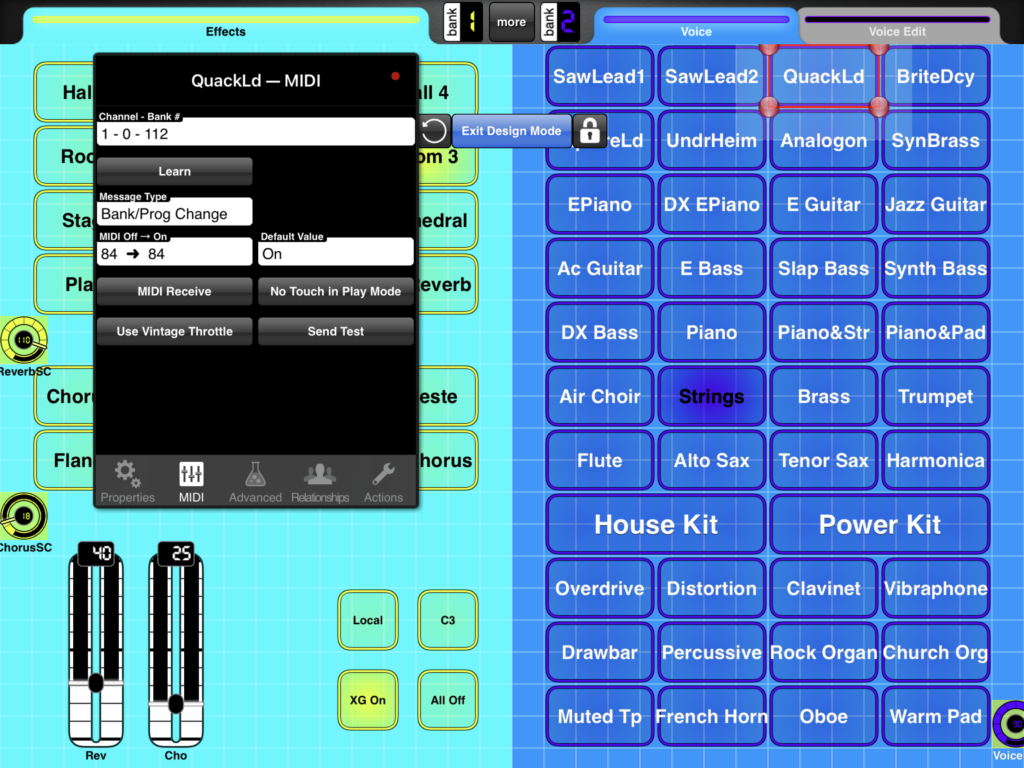

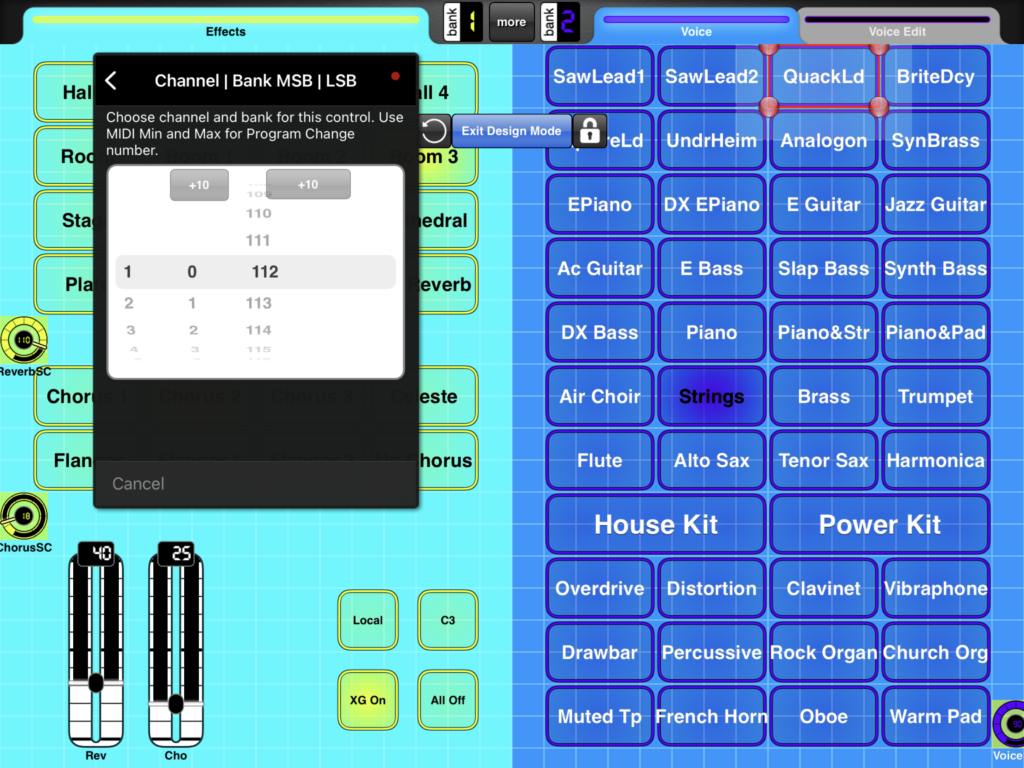

These waveforms are very old, going back to the original Motif (maybe S80) in the early 2000s. I’ll bet dollars to donuts that the Portable Electronic waveform is the basis for the 60’sOrgan voice (MSB: 0, LSB: 116, PC: 18) in PSR and Tyros keyboards. The 60’sOrgan voice was the sole combo organ mainstay in the arranger line for a loooong time.

Motif XF, Montage, MODX

Motif XF got a big shot of combo juice. Motif XF added several combo organ waveforms:

- Fr All Tabs

- Fr Bright Boost

- Fr Flute

- Fr String Lo

- Fr String Hi

- Fr Trumpet

- Fr Piccolo

- Fr Pedal

- Fr KeyOff

- Vx Drawbar1-3

- Vx DrawbarIV

- Vx KeyOff

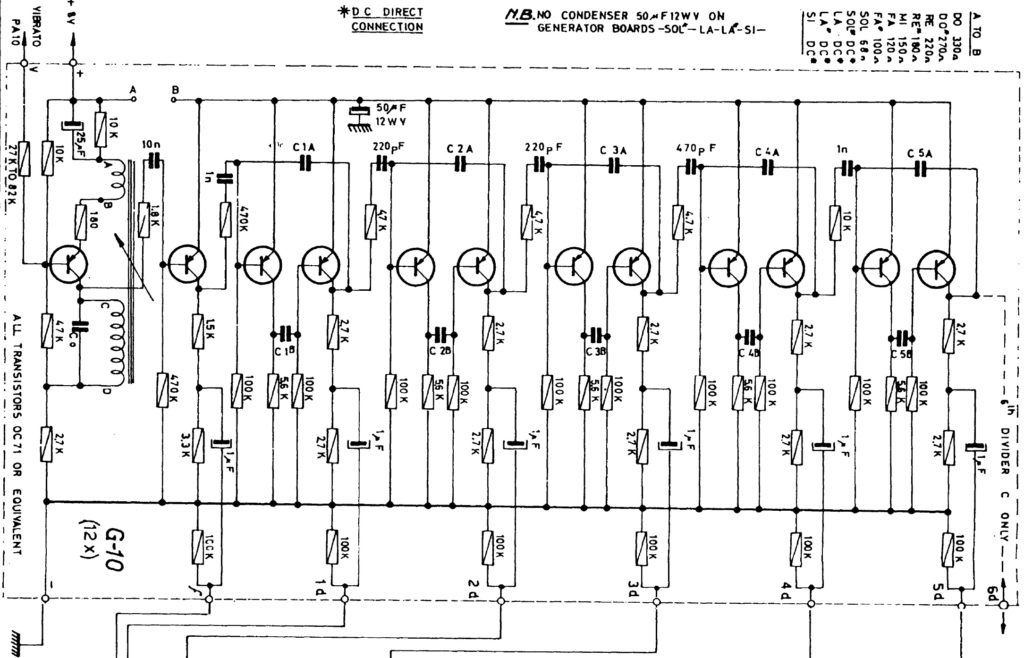

The Farfisa (Fr) waveforms support emulation of specific Farfisa features: individual voice tabs (flute, string, trumpet and piccolo), the wicked Bright Boost knee lever, bass keys (pedal), and key off sound. The Fr All Tabs waveform covers one of the most common use cases — all of the tab stops turned on. Subtlety was not a hallmark of sixties combo organ music. 🙂

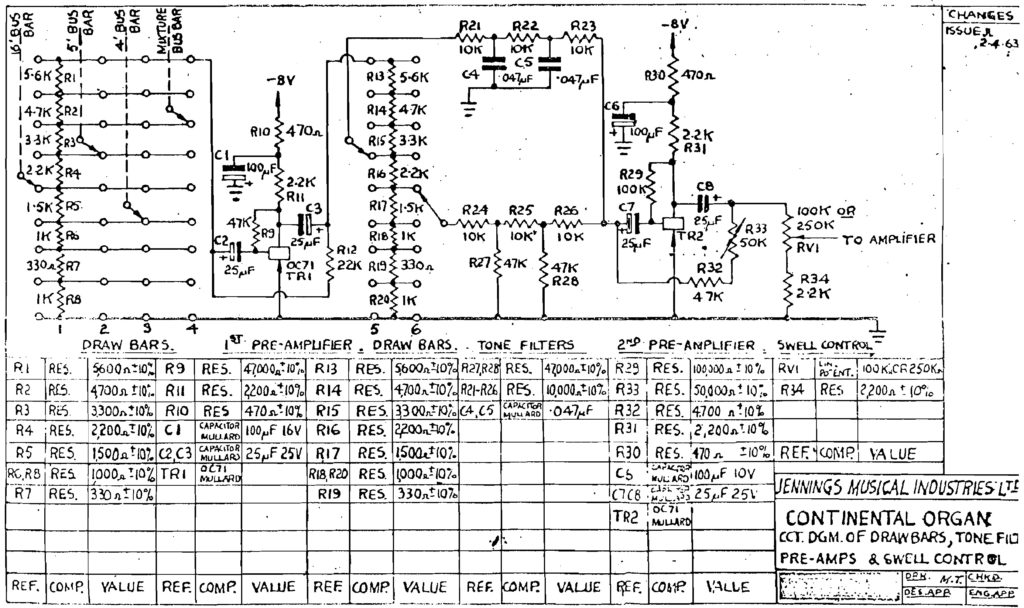

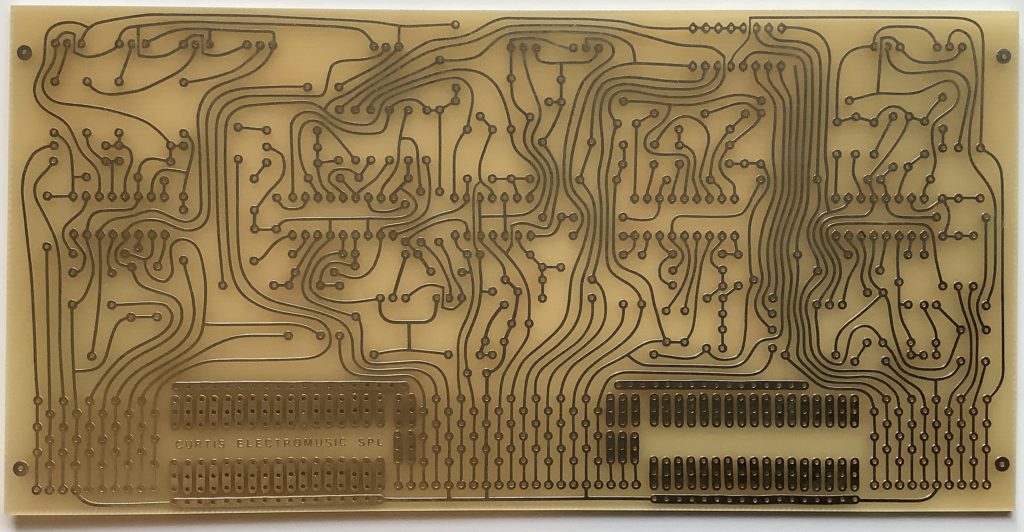

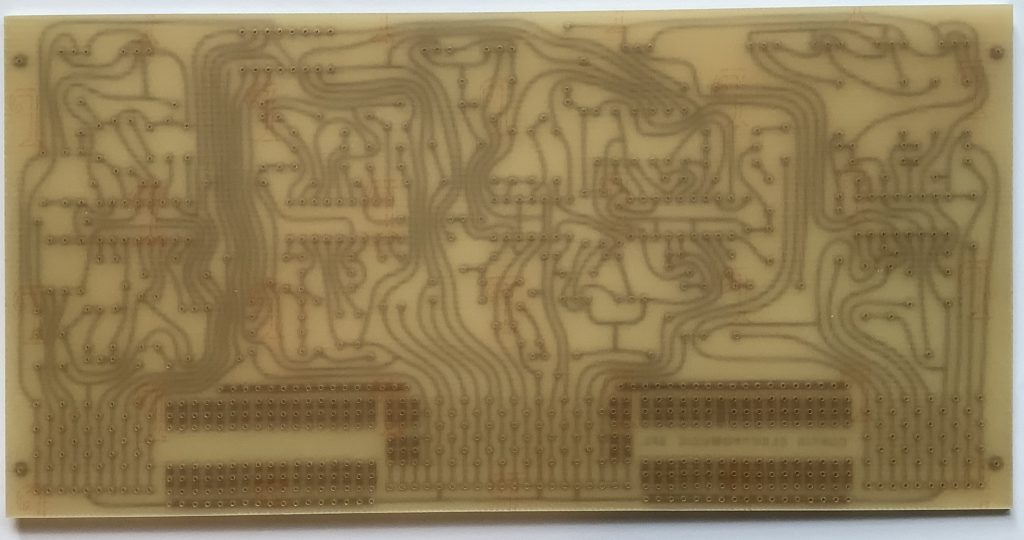

The Vox (Vx) Vx Drawbar1-3 waveform covers the three Continentel footage drawbars while the mixture drawbar is handled by the Vx DrawbarIV waveform. The Vox waveforms include a Vox key-off noise.

In terms of voice programming, one uses note shift to achieve different footage ranks. Passive filtering is emulated through filter type (low pass, band pass, high pass) and cutoff frequency. Of course, everything can be routed into insert effects for distortion, amp simulation, and other grunge.

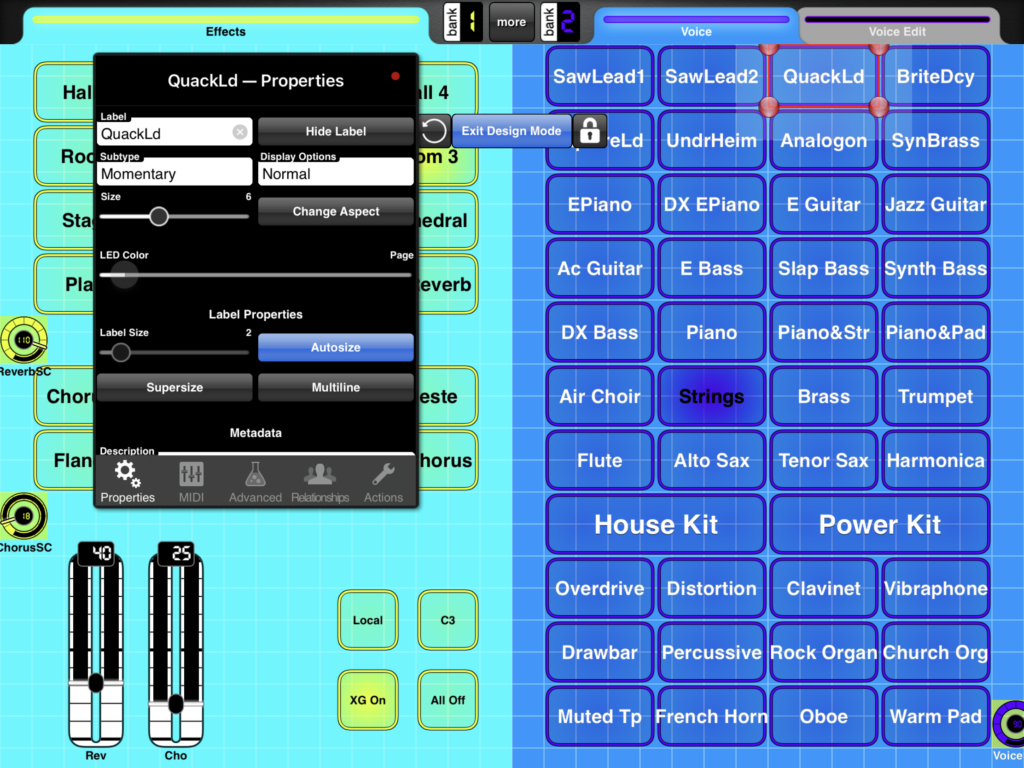

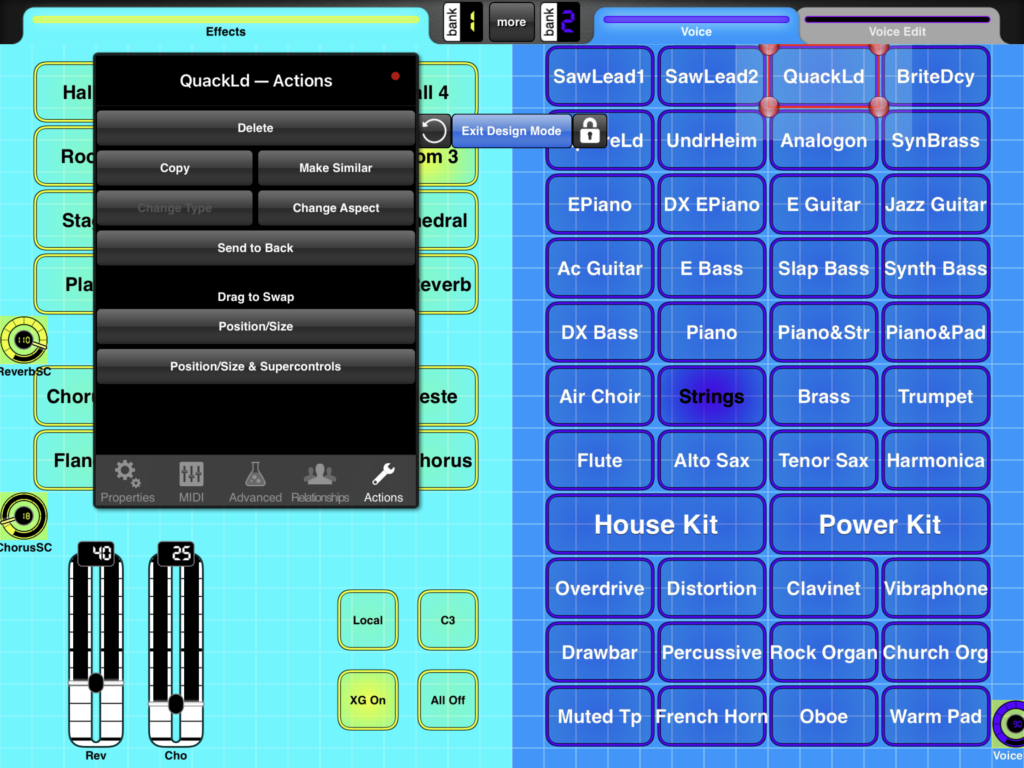

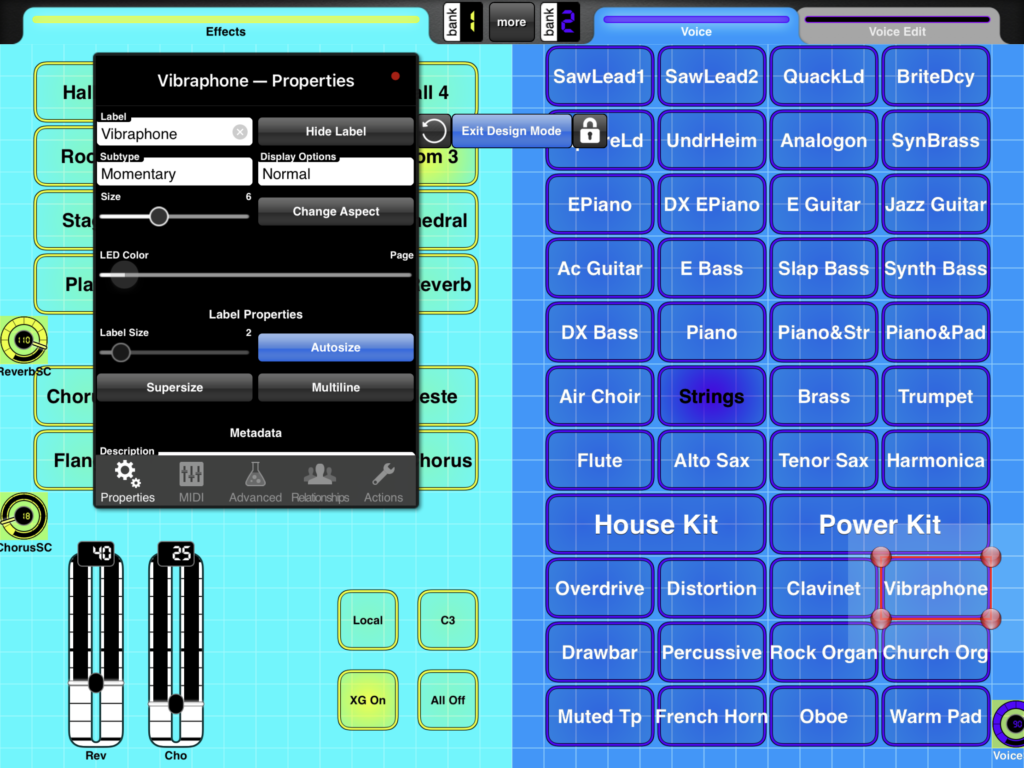

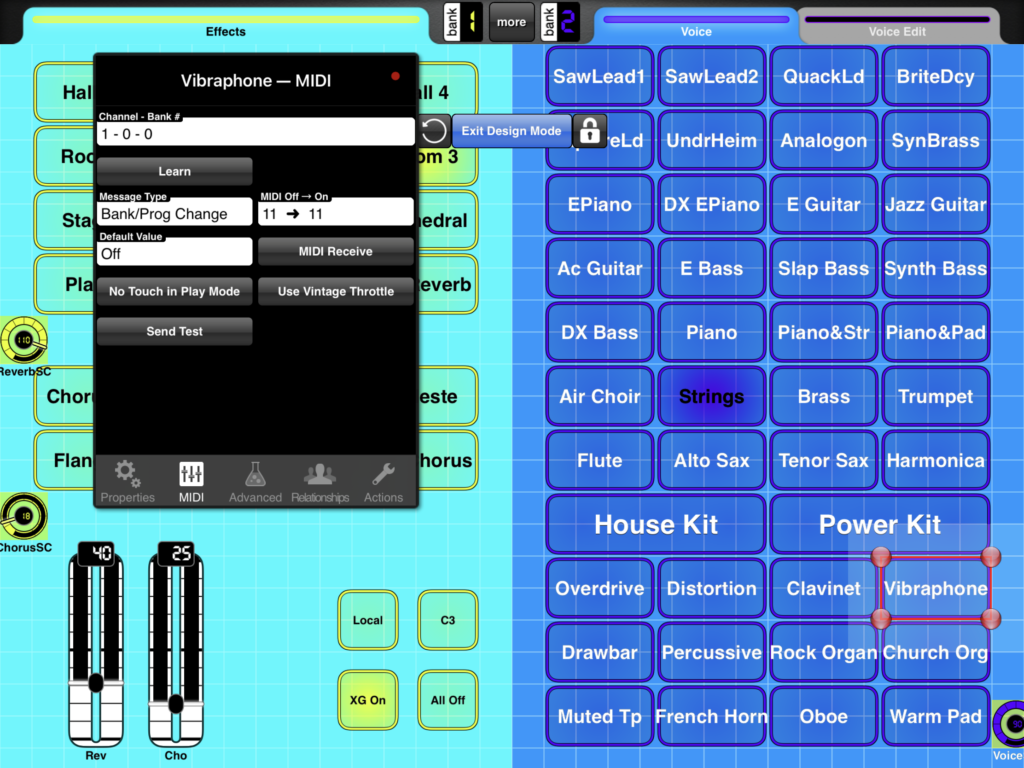

Since Montage and MODX inherit all things Motif, these waveforms and the Motif performances are there for you. The Montage and MODX sliders allow control over individual voice elements. For example, choose the Raspy Tabs performance and assign slider control to element level. The Fr Raspy Tabs waveform-to-element assignment is:

El# Waveform KeyLo KeyHi VelLo VelHi Coarse Level Cutoff XA Ctrl

--- ------------ ----- ----- ----- ----- ------ ----- ------ -------

1 Fr String Lo C2 G8 1 127 0 97 255 Normal

2 Fr Trumpet C2 G8 1 127 0 82 255 Normal

3 Fr Flute C2 G8 1 127 24 120 236 Normal

4 Fr Pedal C-2 B1 1 127 0 127 160 Normal

5 Fr String Hi C2 G8 1 127 19 24 236 A.SW2 On

6 Fr KeyOff C2 G8 1 127 6 87 80 Key Off

Different tabs are brought in and out by moving the corresponding slider. Assignable switch 2 turns on additional brightness. The low keyboard octaves play the bass (pedal) tones. Overall, this is a fairly controllable representation of a wheezy Farfisa Compact.

Because the sliders are not discrete, you can probably make up Farfisa tones which aren’t entirely authentic. But, really, should one care? 😉

Vox performances have similar control-ability. Here is the waveform-to-element assignment in the Vx Full Bars performance:

El# Waveform KeyLo KeyHi VelLo VelHi Coarse Level Cutoff XA Ctrl

--- ------------- ----- ----- ----- ----- ------ ----- ------ -------

1 Vx Drawbar1-3 C-2 G8 1 127 0 75 255 Normal

2 Vx Drawbar1-3 C-2 G8 1 127 12 77 255 Normal

3 Vx Drawbar1-3 C-2 G8 1 127 24 91 255 Normal

4 Vx DrawbarIV C-2 G8 1 127 0 127 250 Normal

5 Vx KeyOff C-2 G8 1 127 4 68 85 Key Off

The first three sliders control the 16′, 8′ and 4′ Vox drawbar settings and the fourth drawbar controls the Mixture (IV) tone. Go ahead, just everything to eleven. 🙂

Montage and MODX FM

But, wait, there’s more! Montage and MODX have two FM combo voices: BOX FM Combo Organ and FM YC Combo Organ. Although these performances don’t sound authentic to my ears, they provide starting points for further programming. I haven’t heard the YC61 as yet, but I wonder if the YC61 combo emulations can be ported to Montage and MODX?

Genos

As I mentioned earlier, the arranger series has been historically short on combo organ sounds, relying on the old 60’sOrgan voice. Wheezy, raspy Farfisa tones are noticably absent. The 60’sOrgan voice sounds like the Portable Electronic waveform on which the Motif 1967 Keys performance is based.

Tyros 4 and Motif XF were introduced at approximately the same time. They certainly were together in the development lab during late 2009. Tyros 4 added four combo organ voices:

- 60sComboOrgan1: VoxContiComb1_Full_NoVib waveform

- 60sComboOrgan2: VoxContiComb1_Full_VibOn waveform

- 60sComboOrgan3: VoxCombi4NoVib waveform

- 60sComboOrgan4: VoxCombi4NoVib091117 waveform

Voices 1 and 2 capture one Vox Continental drawbar combination (Comb1) and voices 3 and 4 capture a second combination (Combi4).

Voices 1 and 3 are without vibrato. Voices 2 and 4, unfortunately, have an excessive amount of vibrato — almost painfully so. When I use voices 2 and 4 in a MIDI sequence, I dial down the vibrato depth using MIDI CC#77 messages. Vibrato frequency is about 5Hz. I also remove touch sensitivity by setting:

- Velocity sensitivity depth to zero, and

- Velocity sensitivity offset to 114.

An organ voice should not respond to touch (key velocity) — ever.

PSR, Tyros and Genos players shouldn’t forget the “hidden” Italian 60s organ voice (It60’sOrgan) in the GM2 sound set. On PSR and Tyros, you’ll find It60’sOrgan within the Legacy voices Organ subfolder. On Genos, you need to download GM2 and XG user voices to the USER voice folder. (See this thread in the PSR Tutorial Forum.) Of course, you can select

It60’sOrgan from a DAW (MSB: 121, LSB: 2, PC: 17) .

All-in-all, you can get a nice Vox tone out of Genos. Farfisa is still missing in action, tho’. Kind of an odd shortcoming of a keyboard with styles and a user base that want to play popular hits from days past.

In the next post, I’ll compare Reface YC combo organs against Montage/MODX (Motif XF). The result may surprise you.

Copyright © 2021 Paul J. Drongowski